Dr. V.K.Maheshwari, M.A. (Socio, Phil) B.Sc. M. Ed, Ph.D.

Former Principal, K.L.D.A.V.(P.G) College, Roorkee, India

Manjul Lata Agrawal, M.A. (History) B.T.

Former Principal S.K.V, Delhi Cantt. Delhi.

HERE ARE A FEW HINDU RELIGIOUS TERMS GENERALLY FORGOTTEN

ACHAMANA :

This is a rite practiced by those of the

Hindu belief. It is a rite in which a worshipper purifies

himself by thinking of pure things while sipping water

and sprinkling water around him. In some ways it is

similar to the sprinkling of water during a Christian

ceremony. The Hindu, having done this, can then retire

into a peaceful state of meditation.

ADHARMA :

This indicates lack of virtue, lack of

righteousness. The poor fellow probably does not

abstain from any of the Five Abstinences.

AGAMA :

A Scripture, or in Tibet a Tantra. It can be

used to indicate any work which trains one in mystical

or metaphysical worship.

AGAMI KARMA :

This is the correct term for Karma.

It means that the physical and mental acts performed by

one in the body affect one’s future incarnations. It is like the saying that as one sows so

shall one reap, which is much the same as saying that if

you sow the seeds of wickedness then you shall reap

wickedness, but if you sow the seeds of good and help

for others then the same shall be returned to you ‘a

thousandfold.’ Such is Karma.

AHAMKARA :

The mind is divided into various parts,

and Ahamkara is the sort of traffic director which

receives sense impressions and establishes them as the

form of correct facts which we know, and which we can call to

mind at will.

AHIMSA :

A policy of peace, of non-violence. It is refraining from

harming any other creature in thought, deed, or word.

AJAPA :

This is a special Mantra. The Easterner

believes that breath goes out with the sound of ‘AJ,’

and is taken in with the sound ‘SA.’ Hansa is the sound

of human breathing. ‘HA,’ breath going out ; ‘N’ as a

conjunction ; ‘SA,’ breath coming in. We make that

subconscious sound fifteen times in one minute, or

twenty-one thousand six hundred times in twenty-four

hours.
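The daily figure follows directly from the per-minute rate, and the arithmetic can be checked in a few lines. This is only an illustrative sketch: the function name `breaths_per_day` and the constant names are hypothetical and appear nowhere in the original glossary.

```python
# Assumed rate taken from the entry above: fifteen breaths in one minute.
BREATHS_PER_MINUTE = 15
MINUTES_PER_HOUR = 60
HOURS_PER_DAY = 24


def breaths_per_day(per_minute: int = BREATHS_PER_MINUTE) -> int:
    """Return the number of breaths in twenty-four hours at a steady rate."""
    return per_minute * MINUTES_PER_HOUR * HOURS_PER_DAY


print(breaths_per_day())  # 15 * 60 * 24 = 21600
```

At fifteen breaths a minute the total comes to twenty-one thousand six hundred, which agrees with the figure given in the entry.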

AJNA CHAKRA :

This is the sixth of the seven commonly accepted

Yogic centres of consciousness.

Ajna chakra is the Lotus at the eyebrow level, a

Lotus, in this case, with only two petals. This is a part

of the sixth-sense mechanism. It leads to clairvoyance,

internal vision, and knowledge of the world beyond this

world.

AKASHA :

Many people refer to this as ether, but a

rather better definition would be — that which fills all

space between worlds, molecules, and everything. The

matter from which everything else is formed.

ANAHATA CHAKRA :

The symbolism of this

Chakra is The Wheel or The Lotus.

This is a Chakra at the level of the heart. It has

twelve petals of a golden colour.

Below this Anahata centre is another manifestation

of The Lotus, one with an eight-petal arrangement

which stirs and waves slightly when one does

meditation.

The Anahata Chakra is the fourth of the seven

commonly known Yogic centres of consciousness.

ANAHATA SHABDA :

This means a sound which is

not an actually perceived sound. Instead, it is an

impression of sound which is often heard during

meditation when one has reached a certain stage. The

sound, of course, is that of the Mantra Om.

ANANDA :

Pure joy. Joy and pleasure unalloyed by

material concepts. It indicates the bliss and happiness

which one experiences when one can get out of the

body consciously and be aware of the absolute rapture

of being free, even for a time, from the cold and

desolate clay sheath which is the human body on Earth.

ANTAHKARANA :

Vedanta philosophy uses this word when referring to the mind

as it is used in controlling a physical body.

APARIGRAHA :

This is the fifth of the Abstinences.

It indicates that one should take the Middle Way in all

things, being not too good but not too bad, avoiding

extremes and being balanced.

ASANA :

This is a posture, or sitting position, and is

used when preparing to meditate.

The Great Masters have tried to create a sensation, tried to

increase their own self-advertised status by ordering

that their Yogic students should indulge in all sorts of

ridiculous and fantastic contortions.

The only thing you have to do in order to meditate is

to sit comfortably, and then you are definitely in the

correct position.

ASAT :

All those things which are unreal or illusory.

This is the World of Illusion, the world of unreality.

The World of the Spirit is the real world.

The opposite of Asat is Sat, that is, those things

which are real.

ASHRAMA :

This means a place wherein Teacher and

pupils reside. Often it is used to denote a hermitage, but

it can also be used to indicate the four main stages into

which life on Earth is divided. Those stages are :

1. The celibate student (BRAHMACHARYA).

2. A married person who thus is not celibate (GRIHASTHA).

3. Retirement and contemplation (VANAPRASTHA).

4. The monastic life, and monastic, you may like to

be reminded, indicates a solitary life (SANNYASA).

ASMITA :

Conceit, egoism, and the puffed-up pride of

the unevolved human. As a person evolves Asmita

disappears.

ASTRAL :

This is a term which is generally used to

indicate the place or condition that one reaches when

one is out of the body.

ATMA :

Some people call it Atman. Vedantic

philosophy regards the Atma or Atman as the

overriding spirit, the Overself, the Ego, or the Soul.

AURA :

Just as a magnet has lines of force about it so

has the body lines of force, but these are lines of force

in different colours, covering a wider range of colours

than human sight could ever see without the aid of

clairvoyant abilities.

AVASTHAS :

A word descriptive of the three states of

consciousness which are :

1. The waking state, during which one is in the body

more or less conscious of things going on about one.

2. The dream world, in which fantasies of the mind

become intermingled with the realities experienced

during even partial astral travel.

3. The deep sleep of the body when one does not

dream, but one is able to do astral travelling.

AVATAR or AVATARA :

This is a very rare person

nowadays. It is a person who has no Karma, a person

who is not necessarily human, but one who adopts

human form in order that humans may be helped. It is

observed that an Avatar (male) or Avatara (female) is

always higher than human.

AVIDYA :

This is a form of ignorance. It is the

mistake of regarding life on Earth as the only form of

life that matters. Earth life is merely life in a classroom,

the life beyond is the one that matters.

BHAGAVAN :

A term indicative of one’s personal

God. The God whom we worship irrespective of the

name which we use, and in different parts of the world

different names are used for the same God.

BHAJAN :

A form of worship of one’s God through

singing. It does not refer so much to spoken prayers,

but is specifically related to singing. One can chant

prayers, and that would be Bhajan.

BHAKTA :

One who worships God, a follower of

God. Again, it must be stressed that this can be any

God, it does not relate to any particular creed or belief,

but is a generic term.

BHAKTI :

An act of devotion to one’s God. The act of

identifying oneself as a child of God, as a subject of

God, and admitting that one is subservient and obedient

to God.

BHAVA :

This is being, feeling, existing, emotion.

Among human beings there are three stages of Bhavas :

1. The pashu-bhava is the lowest group of people.

2. The vira-bhava are the middle group. They have

ambition and desire to progress upwards. They are

strong, and frequently have quite a lot of energy.

3. This group, the divya-bhava, is of a much better

type, with harmonising people who are thoughtful,

unselfish, and really interested in helping others

unselfishly.

BODHA :

That knowledge which can be imparted to

another person whom one is teaching. It is also referred

to as wisdom or understanding.

BRAHMA :

A Hindu God frequently represented with

four arms and four faces and holding various religious

symbols.

BRAHMALOKA :

This is that plane of existence

where those who have succeeded in the Earth life go

that they may commune with others in the next plane of

existence. It is a stage where one lives in divine

communication while meditating on and preparing for

fresh experiences.

BRAHMA-SUTRAS :

All these words come from

India, and the Brahma-Sutras are very famous

aphorisms which place before one the principal

Teachings of the Upanishads.

BREATH :

One should also give it the name of

Pranayama, but as this would mean nothing to the

majority of people, let us be content with the word

Breath.

BUDDHA :

This is not a God, this is a person who has

successfully completed the lives of a cycle of existence,

and by his success in overcoming Karma is now ready

to move on to another plane of existence.

A Buddha is a person who is free from the bonds of

the flesh. The one who is frequently referred to as ‘The

Buddha’ was actually Siddhartha Gautama. He was a

Prince who lived some two thousand five hundred years

ago in

BUDDHI :

A word meaning wisdom, and we must

always keep before us the awareness that wisdom and

knowledge are quite different things.

CHAITANYA :

A state when the spiritual

consciousness has just been awakened, and one is alert

and ready to progress upwards, taking the first steps to

leave the causal body behind one.

CHAKRAS :

We should concentrate upon the six

Chakras. Along our spine, like wheels threaded along

our spinal column, are the six main Chakras or centres

of psychic consciousness. There are various centres

which keep our causal body in touch with our higher

bodies, in touch with our higher centres.

CHITTA :

This is the lower mind. There are three

parts of the mind, or it might be better to say mindstuff.

The first is Manas ; the second is Buddhi ; and the

third is Ahamkara. The first, of course, is the lowest.

CONCENTRATION :

This is the art of devoting one’s

full attention to one thing, it may be a physical thing or

an intangible thing, such as an idea.

DEVA :

A Deva is a Divine Being, one who is quite

beyond the human state. Anyone who has attained to

the necessary degree of enlightenment and purity, and

is no longer on this Earth, could be a Deva.

Nature Spirits and manmade thought forms are not,

and cannot ever be Devas of the human type, although

naturally Nature Spirits and Animal Spirits have their

own Group-Devas.

DEVILS :

These people are the negative of the positive

of good. It follows that if there were no devils there

would be no Gods ! If we have a positive we must have

a negative otherwise the positive could not exist.

DHANURASANA :

This Dhanurasana is a Yogic Posture sometimes

termed the Bow Posture.

DHARMA :

This word can indicate merit, good

morals, righteousness, truth, or a way of life. Its true

meaning, however, is ‘that which holds your true

nature.’

DHAUTIS :

Dhautis is a system of purification of the physical

body, and does not confer any psychic abilities. Certain

people in India swallow air and expel it forcibly in

various unusual ways. Afterwards they swallow water

and expel that in the same unusual ways.

DHYANA :

This is a meditation or a deep form of

concentration. It is an unbroken flow of thought

towards that upon which one concentrates. It is a word

which in Raja Yoga is known as the Seventh of the

Eight Limbs.

DIKSHA :

This is the art of initiating a student into

spiritual life, and is carried out by the Teacher or Guru

concerned.

DIVINITY :

This is one of the very old original

Sanskrit words. It goes back to the earliest days of

Mankind. It means ‘to shine.’ Often a Deva or a

Godlike person will be known as ‘The Shining One.’

DREAMS :

One of the most misunderstood subjects of

all. Because of Western Man’s conditioning Western

Man can rarely believe in astral travelling and such

things, thus it is that when the astral body rejoins the

physical body complete with a lot of most interesting

memories

DWAPARAYUGA :

Throughout the world in world

religions there are various systems which divide the life

of this world into different periods or cycles.

DWESHA :

This is aversion, dislike as opposed to

like. It goes back into the memory department. If we

have had a severe shock we dislike that which caused

the shock, and we try to avoid getting such shocks in

the future.

ETHERIC DOUBLE :

This is the substance existing

between the physical body and the aura. The etheric is

of a bluish-grey colour, and is not substantial like flesh

and bone. The etheric can pass through a brick wall,

leaving both intact.

The etheric double is the absolute counterpart of the

human flesh and blood body, but in etheric form. The

stronger a person’s physical, the stronger will be the

etheric. When a person dies, and that person has had a

certain gross interest in life, his etheric double is

physically very strong and he leaves a ghost which,

through habit, acts in precisely the same way as the

person did while in the physical body.

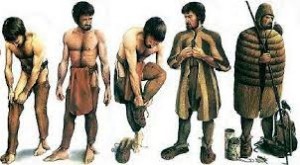

EVOLUTION :

Everything is in a state of evolution.

A child is born as a helpless baby, and gradually

evolves into an adult. People go to school, and their

evolution is such that they progress from class to class.

Men do not become angels on the earthly stage of

evolution any more than animals turn into humans on

this world. All must evolve according to the plans of

the Universe, and according to their own species.

The development of Man, or Mankind, has been

proceeding for many millions of years.

EXPERIENCES :

Many people during their time upon

Earth have ‘experiences.’ They imagine they see things,

or they actually do see things. They could be surer if

they kept more accurate reports.

FAITH :

Faith is no idle, senseless, ignorant belief. Faith

grows and grows as one explores that in which one has

faith.

FEAR :

One of the greatest dangers in any form of

occult study is of being afraid. In the East teachers tell

the pupil, ‘Fear not for there is naught to fear but fear.’

Fear corrodes our abilities for clear perception. If we

are not afraid, nothing whatever can hurt us or disturb

us. Therefore — fear not.

GAYATRI :

This is the name given to a most

important Mantra. Christians recite The Lord’s Prayer,

which, after all, is just a Christian Mantra. The Hindu

recites the Gayatri.

A Hindu will go through certain ceremonies, and

then recite this Mantra daily. Here are the actual

words : ‘Om, bhur, bhuvah, swah. Tat savitur varenyam

bhargo devasya dhimahi. Dhiyo yo nah prachodayat.

Om.’

The meaning of this translated into English is : ‘We

meditate upon the ineffable effulgence of that

resplendent Sun. May that Sun direct our understanding

for the good of all living.’

GHOST :

That eerie thing which swishes around in the

night with a few creaks and groans, and which causes

the hair on our heads to stand straight up, is harmless !

A ghost is just an etheric force which wanders about

according to the habits of its previous owner, until

eventually that etheric force, that etheric double, is

dissipated.

GRANTHIS :

This peculiar word means a form of

knot. There are three ‘knots,’ the basal, the heart, and

the eyebrow knot.

In time everyone has to raise the Kundalini in order

to progress spiritually and metaphysically. Raising the

Kundalini means that one has to break through these

knots, it means that one has to break free from physical

lusts, free from physical desires and spites.

GURU :

That wondrous, misunderstood word merely

means ‘A weighty person.’

A Guru means in its commonly accepted term, One

whose words are worthy of consideration. A Guru is a

Teacher, a spiritual Teacher, and he should be an

illumined soul, one who has raised the Kundalini and

knows how to raise it in others.

GURUBHAI :

This refers to any male person studying

under the same spiritual Teacher. One should also give

the name applying to a female because nowadays the

ladies, the so-called weaker sex, are often the stronger

sex when it comes to spirituality. So, ladies, if you

study under the same spiritual Teacher you are a

Gurubhagini.

Gurus are often referred to as ‘Master.’ That is

completely and absolutely and utterly wrong. A Guru is

a Guru, ‘a weighty counsellor,’ not a Master.

HALASANA :

This is sometimes referred to as the

Plough Posture. It should be emphasised again that all

these exercises really do not do anyone any good.

Sometimes it is claimed that it develops spiritual

discipline, but if one already has the discipline

necessary to tie oneself in a knot, then surely that

discipline can be directed into far more useful channels.

HARI :

Sometimes people call Vishnu by that name,

but actually Hari means ‘to take away.’

The mistake arose in an original translation because

Vishnu was alleged to remove sins and faults by love

and wisdom. Actually, of course, we can only remove

faults and sins ourselves by adopting the right attitude

to life, and towards others.

There are other meanings attached to Hari.

HARI BOL :

This means ‘chant the name of the Lord

that ye may be purified and your sins may be washed

away.’

HARI OM :

This meaning of Hari is that of a sacred

syllable, or actually, to be strictly correct, sacred

syllables.

HATHA-YOGA :

This is just a series of exercises, a

system of physical exertion. It is meant to give one

mental or spiritual discipline, or something like that,

but it is concerned only with postures of the body and

need not be taken in any way seriously. It should be

borne in mind that the true Masters of the Occult, the

true Adepts, never go in for this Hatha-Yoga stuff.

According to the people who do try these stunts, ‘Ha’

means the sound of a breath going in, and ‘Tha’ is the

sound of the breath coming out.

HYPNOTISM :

Most people do not realise the terrible

force latent in hypnotism. Hypnotism should never,

never, never be used except under the most stringent

conditions.

AUTO-HYPNOTISM :

This is a process under which

a person is able to disassociate the conscious and the

sub-conscious, and in which the conscious part of one

acts as the hypnotising agent.

ICHCHHASHAKTI :

It is the special power which enables the Adept, who

is breathing correctly, to accomplish levitation.

Levitation is quite possible, and rather easy to do,

especially if one really has a sound reason for it.

This ‘will-power’ is that which enables us to see into

the future, or into the probable future, and which

enables us within a limited extent to pre-order future

occurrences. It is the power by which so-called

‘coincidences’ take place.

ISHWARA :

Some people use this word as meaning,

or indicating, God. This is particularly so among the

Brahmans.

JAGRAT :

This refers to the waking state, being

awake in the body as opposed to being asleep in the

body. Being in a condition where one is aware of that

which is occurring about one, where one is able to see,

to hear, to speak, to feel, etc.

JAPA :

A word which means ‘repetition.’ It has

nothing at all to do with meditation, but merely

indicates that one repeats a word with the idea that

perhaps one can get help from other sources.

JNANA :

This is knowledge, awareness of life beyond

the life of the world. It is knowledge of the Overself,

knowledge of why one comes to the Earth, what one

has to learn, and how one has to learn it. It is the

knowledge that although an Earth life may be a terrible,

terrible experience, yet it is just the twinkling of an eye

in the time of the Greater Life.

Poor consolation while we are down here !

JNANI :

This is a person who knows, a person who

follows the road of knowledge, one who tries to reach

to the Greater Reality and to escape from the shackles

and pains of life on Earth. A person who can approach

this stage is indeed approaching liberation or

Buddhahood.

KAMA :

This is desire, a craving. It is a memory of the

pleasures and the pains previously experienced. Often

these memories are the causes of habits such as

smoking or drinking.

KLESHA :

Actually there are five Kleshas because

these are the names of the five main things which cause

people trouble, cause people to come back to Earth time

after time until they haven’t any more Kleshas.

KOSHA :

This is a covering or sheath. Sometimes it is

termed a container. There are five Koshas described in

certain Upanishads. These are located each within the

other. The inner one is the body which is fed by food,

that is, the physical body, and if you want the Eastern

name for it, it is Annamayakosha.

The second is the body of Prana, and this is the part

which keeps mind and body together. The Eastern

name for it ? Pranamayakosha.

Third, we have the sheath of the mind which has the

sense impressions. This contains the higher and lower

minds. The Eastern word is Manomayakosha.

Fourth is the sheath, or body, of intellect or wisdom.

This is the start of the Buddhi, and the Eastern name for

this fourth Kosha is Vijnanamayakosha.

The fifth Kosha is the body of bliss, which is often

referred to as the Ego. It is ‘A Sheath of Joy,’ and the

Eastern name is Anandamayakosha.

KRIYA YOGA :

This is a branch of Yoga which has

three sections. The first section enables one to control

the body and the functions of the body.

The second section gives one the ability to study

mental things and to develop the memory so that one is

able to obtain from the sub-conscious all that which one

has previously learned.

The third gives one a desire to be attentive to one’s

spiritual requirements.

KUMBHAKA :

This is a special form of breathing, a

special method or pattern of breathing. It is the

retention of the breath between breathing in and

breathing out, and much benefit can be obtained from

practicing according to certain fixed rules.

KUNDALINI :

This is a life force. It is THE life force

of the body. Just as a car cannot run without having

electricity to fire the mixture in the cylinders, so

humans cannot live in the body without the life force of

Kundalini.

In Eastern mythology the Kundalini is likened to the

image of a serpent coiled up below the base of the

spine. As this special force is released, or awakened, it

surges up through the different Chakras and makes a

person aware of esoteric things. It awakens

clairvoyance, telepathy, and psychometry, and enables

one to live between two worlds, moving from one to

the other at will without inconvenience.

LIBERATION :

The Eastern term is Moksha, so it

will be better to refer to that term, Moksha, for the

meaning of liberation.

LILA :

Some sects of Eastern belief are of the opinion

that God, a great Being whom no one can fully

visualise nor comprehend, created the world and all

other worlds, and all that are within those worlds, as a

plaything, and parts of God entered into the puppets

who were the humans, the animals, the trees, and the

minerals. So the essence of God thus could live as all

living creatures, gaining experience from the

experience of all creatures.

LINGA :

Actually this is a sign representing Shiva, but

it is also used to indicate a phallic symbol.

In the days of long ago the peoples of the Earth had

the most interesting task of populating the Earth as

quickly as they could. Hence it is that the priests, who

thought that the more subjects they had the more power

they would have, made an order and called it a Divine

Order.

LOKA :

A Loka is a plane of existence, a plane which

is a complete world to one who is there. We, upon this

Earth, are solid creatures to each other. ‘Ghosts’ are

solid creatures to other ‘ghosts.’ Everything is solid and

substantial to creatures, or beings, or entities who are

going to exist in that particular world or plane of

existence.

LOTUS :

The Lotus symbolises many things to the

Easterner. It is a sacred symbol of Far Eastern religion.

The Lotus is a plant which grows on the dirtiest and

muddiest of water, it grows in the foulest surroundings,

and yet no matter how foul those surroundings, the

Lotus remains pure and unsullied and quite

uncontaminated by that which is around it.

MANAS :

This is the thought power of a human.

Human beings have certain power in the same way as a

storage battery has power.

MANTRA :

Actually a Mantra is a particular name for

God, but by common usage it now is taken to mean

something else ; it is a form of prayer, it is the

repetition of something sacred whereby one gains

power. If one repeats a Mantra conscientiously and

reverently one attains to purification of thought.

A Mantra should only be used for good, and never

for bad, for there is an old saying that ‘He who digs a

grave for another may fall in it.’ Thus it is that Mantras

should only be used for good, they should only be used

unselfishly and to help others.

OM :

This is known as a word of power. When it is

uttered correctly, and with the appropriate force

according to the circumstances, it confers great benefit

on the utterer. The pronunciation is ‘OH-M.’

RISHI :

This is a Saint, or a good-living person, or one

who has mediumistic abilities.

Usually a Rishi is a person who has in some way

been responsible for the Sacred Scriptures of a religion.

Rishi — an inspired seer.

SADHU :

A holy man, maybe a hermit, but particularly

a monk. A person who leaves a lamasery or monastery

and wanders among the people is given the term

‘Sadhu’ in much the same way as among Christians a

similar person would be called ‘Father’ or ‘Reverend.’

SAHASRARA :

This is the highest of the physical

centres of Yogic consciousness. It is the seventh, and

although, as previously stated in this book, there are

nine centres, only seven are named in the West.

Sahasrara is also called The Thousand-petalled

Lotus.

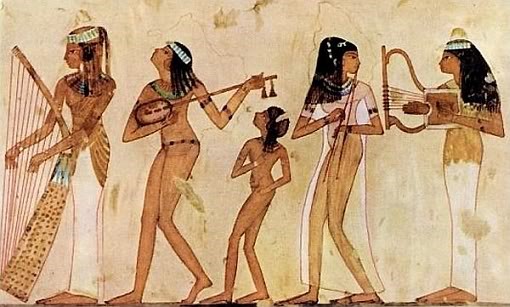

SARASVATI :

Most religions have ‘a Divine Mother.’

There is a Divine Mother of the Christian belief, a

Divine Mother of the Lamaistic belief, and a Divine

Mother as a consort of Brahma.

Sarasvati is the Goddess of Learning and the Patron

Saint of the Arts.

SAT :

It is the reality, the Overself, that which we shall

become if we behave ourselves and wait long enough.

SATYA :

This means truthfulness, and abstention from

deceiving others. It is known as the Second of the

Abstinences. One must be completely truthful,

completely honest with oneself, as with others, if one is

to make progress.

SATYA YUGA :

This is the first of the four world

periods. Various religions divide world periods into a

certain number of years, and Satya Yuga, also known

as Krita, is a period of 1,728,000 years.

SHAKTI :

Here again we have the Mother of the

Universe. The Mother is the principle of Primal Energy.

She is that which creates, preserves and ends the

Universe. It is, also, the forces seen in the manifested

Universe.

SHANTI :

In lamaseries and Buddhist monasteries the

word Shanti, which means peace, will be repeated at

the end of a discourse.

SHIVA :

This is a word with many meanings. In the

Hindu trinity of Gods it means the God who dissolves

us from the Earth, the power called the destroyer which

releases humans from the earth-body. It is a ‘God’

venerated by Yogis who seek release from the flesh.

We have three forms, which are birth, life, and death.

There is a ‘God’ which determines when we shall be

born. There is a ‘God’ which supervises us during life,

and there is a ‘God’ (Shiva) which gives us release

from Earth in the form of death.

SIDDHA :

This is one who has progressed through the

various cycles of incarnations, and is now a ‘Perfect

Soul,’ one who has not yet reached the stage of actual

Divinity, but who is progressing and is therefore at the

stage of semi-Divinity.

From this we have :

SIDDHI :

This means spiritual perfection. It also

means that one has considerable occult power.

SOUL :

A much misunderstood word. It is our Ego,

our Overself, our puppet master, the real ‘I.’ That spirit

which is using our flesh body in order to learn things on

Earth which could not be learnt in the spirit world.

SRI :

This merely means ‘Reverend,’ or ‘Holy,’ when

it is used as a prefix to a holy personality or a sacred

book. Otherwise it is used in much the same sense as

English people use the word ‘Esquire,’ or the

Americans use ‘Mr.’

SRIMATI :

A form of address prevalent in India. It is

the equivalent of ‘Miss’ or ‘Mrs.’ or ‘Senorita’ or

‘Senora.’ There is nothing mystical, nothing religious

about it, it is just a generic form of address for ladies

with or without culture.